Introduction

We are now several days into another year of Azure Back to School. I hope you’ve enjoyed the content so far as much as I have, and thanks again to the team for organising it and having me back for another year. I can’t wait for the rest of the month. Check it all out at https://azurebacktoschool.github.io/

This year, I’m going to look at some of the challenges that BCDR (Business Continuity and Disaster Recovery) can pose for Azure Networking. This is something I have seen come up quite a lot recently, as companies move to solidify their footprint, close gaps, and make use of everything Azure has to offer to “keep the lights on”.

High-Level Architecture

As with many of my articles like this, it is important to call out the scope of the discussion. Azure is a vast platform, and I will be the first to say that every environment is unique. As such, this doesn’t aim to be an exhaustive, several-thousand-word piece covering every scenario. Instead, it will focus on these core network services: Virtual Network, Virtual Network Gateway, Azure Firewall, Network Security Group, Route Table, and Public IP.

Core BCDR Components

In a similar introductory fashion, it is also important to highlight the Azure BCDR concepts included in this discussion. Essentially, an understanding of what an Azure region is and what Availability Zones are will cover you here.

Network Architecture and Outage Scenario

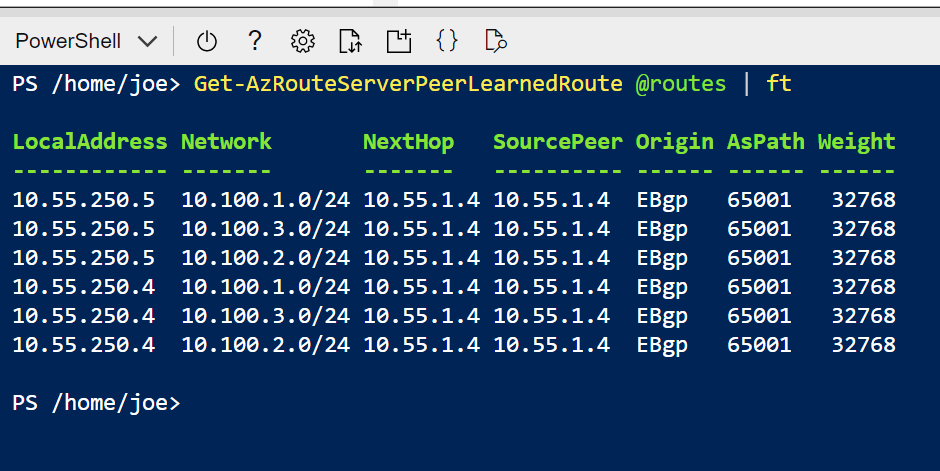

OK, so let’s look at a typical production network setup in Azure. The Azure Architecture Center has some excellent materials and guides; however, we’re going to focus on this one: Hub-Spoke.

As you can see from the diagram, it includes several of the services I have mentioned. Some secondary services, like Public IP addresses, are not shown explicitly, but we all know that Bastion, Azure Firewall and VPN Gateway each require one.

Network Services Alignment

So let’s look at how these services align to core Azure BCDR requirements. One thing to note here is that Azure divides its services into categories based on their regional availability:

- Foundational – Available in all recommended and alternate regions when a region is generally available, or within 90 days of a new foundational service becoming generally available.

- Mainstream – Available in all recommended regions within 90 days of a region’s general availability. Mainstream services are demand-driven in alternate regions, and many are already deployed into a large subset of alternate regions.

- Strategic – Targeted service offerings, often industry-focused or backed by customized hardware. Strategic services are demand-driven for availability across regions, and many are already deployed into a large subset of recommended regions.
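To make the three categories concrete, here is a minimal Python sketch that encodes the definitions above as a simple lookup. The `Category` and `RegionType` enums and the `expected_availability` helper are hypothetical names, not an Azure API, and the sketch deliberately ignores the 90-day window for newly released foundational services. It is a reading aid, not a substitute for checking Azure’s published regional availability.

```python
from enum import Enum


class Category(Enum):
    FOUNDATIONAL = "Foundational"
    MAINSTREAM = "Mainstream"
    STRATEGIC = "Strategic"


class RegionType(Enum):
    RECOMMENDED = "recommended"
    ALTERNATE = "alternate"


def expected_availability(category: Category, region: RegionType,
                          days_since_region_ga: int) -> str:
    """Rough reading of the category definitions above.

    Returns "expected", "pending" (still inside the 90-day window) or
    "demand-driven". Simplification: ignores the 90-day window that applies
    to newly released foundational services.
    """
    if category is Category.FOUNDATIONAL:
        # Present in recommended and alternate regions from region GA.
        return "expected"
    if category is Category.MAINSTREAM and region is RegionType.RECOMMENDED:
        # Committed within 90 days of the region going GA.
        return "expected" if days_since_region_ga >= 90 else "pending"
    # Mainstream services in alternate regions, and all strategic services.
    return "demand-driven"


if __name__ == "__main__":
    for cat in Category:
        for reg in RegionType:
            print(cat.value, reg.value, expected_availability(cat, reg, 30))
```

The useful takeaway is the asymmetry: only the foundational category gives you a firm expectation in alternate regions.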

It also then divides how Azure services support Availability Zones:

- Zonal – A resource can be deployed to a specific, self-selected availability zone to achieve more stringent latency or performance requirements. Resiliency is self-architected by replicating applications and data to one or more zones within the region. Resources are aligned to a selected zone.

- Zone-Redundant – Resources are replicated or distributed across zones automatically. A zone-redundant storage (ZRS) account is a good example.

- Always-Available – Services are always available across all Azure geographies and are resilient to both zone-wide and region-wide outages.
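The practical difference between the three zone models shows up when something fails. The following is a simplified Python sketch of the definitions above; the `ZoneSupport` enum and `survives` helper are hypothetical, and the model ignores any replication you architect yourself for zonal resources.

```python
from enum import Enum
from typing import Optional


class ZoneSupport(Enum):
    ZONAL = "Zonal"
    ZONE_REDUNDANT = "Zone-Redundant"
    ALWAYS_AVAILABLE = "Always-Available"


def survives(support: ZoneSupport, outage: str,
             resource_zone: Optional[int] = None,
             failed_zone: Optional[int] = None) -> bool:
    """Does a single resource stay up through a "zone" or "region" outage?

    Simplified model of the definitions above; it ignores any replication
    you architect yourself for zonal resources.
    """
    if outage == "region":
        # Only always-available services are resilient to a region outage.
        return support is ZoneSupport.ALWAYS_AVAILABLE
    if outage == "zone":
        if support is ZoneSupport.ZONAL:
            if resource_zone is None:
                raise ValueError("a zonal resource needs resource_zone")
            # A zonal resource lives or dies with the zone it is pinned to.
            return resource_zone != failed_zone
        # Zone-redundant and always-available resources span zones.
        return True
    raise ValueError(f"unknown outage type: {outage!r}")


if __name__ == "__main__":
    print(survives(ZoneSupport.ZONAL, "zone", resource_zone=1, failed_zone=1))
    print(survives(ZoneSupport.ZONE_REDUNDANT, "zone", failed_zone=1))
    print(survives(ZoneSupport.ZONE_REDUNDANT, "region"))
```

In this model a zonal resource pinned to zone 1 goes down with zone 1, a zone-redundant one does not, and only the always-available case rides out a region outage.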

Finally, before we get to our specific services, remember that not all Azure regions are equal. Some have all services, some don’t. Some support Availability Zones, some don’t. Make sure you are confirming your requirements against your proposed region – every time – as updates happen quickly!

OK, so on to our specific network services and how they align:

- Virtual Network – Foundational & Zone-Redundant

- Virtual Network Gateway – Foundational & Zone-Redundant*

- Azure Firewall – Mainstream & Zonal & Zone-Redundant*

- Network Security Group – Foundational & Zone-Redundant

- Route Table – Foundational & Zone-Redundant

- Public IP – Foundational & Zonal & Zone-Redundant*

*SKU dependent, not all SKUs have the same feature set.
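If you keep this alignment as data (for example alongside your deployment templates), the two follow-up questions become easy to answer. This is a minimal Python sketch; the values are copied from the list above, while the `Alignment` dataclass and helper functions are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alignment:
    category: str            # Foundational / Mainstream / Strategic
    zone_support: frozenset  # e.g. {"Zonal", "Zone-Redundant"}
    sku_dependent: bool      # the "*" in the list above


ALIGNMENT = {
    "Virtual Network": Alignment("Foundational", frozenset({"Zone-Redundant"}), False),
    "Virtual Network Gateway": Alignment("Foundational", frozenset({"Zone-Redundant"}), True),
    "Azure Firewall": Alignment("Mainstream", frozenset({"Zonal", "Zone-Redundant"}), True),
    "Network Security Group": Alignment("Foundational", frozenset({"Zone-Redundant"}), False),
    "Route Table": Alignment("Foundational", frozenset({"Zone-Redundant"}), False),
    "Public IP": Alignment("Foundational", frozenset({"Zonal", "Zone-Redundant"}), True),
}


def needs_regional_plan(alignment: dict) -> list:
    """Services that are not Always-Available need a second-region deployment."""
    return sorted(name for name, a in alignment.items()
                  if "Always-Available" not in a.zone_support)


def needs_sku_check(alignment: dict) -> list:
    """Services whose zone support depends on the SKU you pick."""
    return sorted(name for name, a in alignment.items() if a.sku_dependent)


if __name__ == "__main__":
    print("Plan a secondary region for:", ", ".join(needs_regional_plan(ALIGNMENT)))
    print("Confirm SKU for:", ", ".join(needs_sku_check(ALIGNMENT)))
```

Every one of the six services lands in the first list, which is the point made in the next paragraph.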

The first thing you should notice is that all of these networking services have really strong coverage for BCDR. However, not one of them is regionally resilient. That means that regardless of your in-region, zonal design, you may need additional configuration and deployment in a second region, depending on your requirements.

Let’s look within a single region first, using the same example architecture. Within a fully supported region (remember, always check!) such as North Europe, we can deploy the entire architecture to be zone-redundant. This means that should an entire zone be lost, our network services will stay active. In Azure terms, this is the equivalent of a 99.99% SLA. Obviously this requires some small tweaks during deployment, and a slight uptick in cost due to SKU requirements, but it is honestly an excellent baseline to work from.
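To put 99.99% into perspective, here is a quick back-of-the-envelope calculation of the downtime an availability target allows. It assumes a 30-day month and a 365-day year; the actual SLA terms, measurement periods and service credits are defined in Microsoft’s SLA documents, not here.

```python
def downtime_budget_minutes(sla_percent: float, period_hours: float) -> float:
    """Maximum downtime (in minutes) an availability target allows over a period."""
    unavailable_fraction = 1 - sla_percent / 100
    return unavailable_fraction * period_hours * 60


MONTH_HOURS = 30 * 24   # assumption: a 30-day month
YEAR_HOURS = 365 * 24   # assumption: a 365-day year

if __name__ == "__main__":
    for sla in (99.9, 99.99):
        month = downtime_budget_minutes(sla, MONTH_HOURS)
        year = downtime_budget_minutes(sla, YEAR_HOURS)
        print(f"{sla}%: {month:.2f} min/month, {year:.2f} min/year")
```

That works out to roughly 4.3 minutes a month (about 52.6 minutes a year) at 99.99%, against about 43 minutes a month at 99.9%.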

Challenges

One challenge here: I am not aware of a service that allows you to modify its zonal configuration after deployment. You must set it at deployment time. This means that if you’re approaching an existing environment with this in mind, you might face quite a few maintenance windows, rebuilds and so on. Bicep is your friend here for testing and deployment.
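Because zone settings are fixed at deployment, a useful first step on an existing environment is simply working out what has to be rebuilt. The following Python sketch compares current and desired zone settings; the resource names are hypothetical, and in practice your desired state would live in Bicep, with this acting only as a planning aid.

```python
def plan_zone_changes(current: dict, desired: dict) -> dict:
    """Compare current and desired zone settings per resource.

    Assumption (from the text above): zone configuration cannot be changed
    in place, so any mismatch means a rebuild in a maintenance window.
    Zone lists are compared as sets, so ordering does not matter.
    """
    plan = {"deploy": [], "rebuild": [], "unchanged": []}
    for name, want in desired.items():
        if name not in current:
            plan["deploy"].append(name)    # new resource: deploy as desired
        elif set(current[name]) != set(want):
            plan["rebuild"].append(name)   # zones differ: redeploy
        else:
            plan["unchanged"].append(name)
    return {key: sorted(names) for key, names in plan.items()}


if __name__ == "__main__":
    # Hypothetical hub resources; () means no zones configured.
    current = {"afw-hub": (), "pip-hub-fw": ("1",), "pip-hub-vgw": ("1", "2", "3")}
    desired = {
        "afw-hub": ("1", "2", "3"),
        "pip-hub-fw": ("1", "2", "3"),
        "pip-hub-vgw": ("3", "2", "1"),
        "pip-hub-bas": ("1", "2", "3"),
    }
    for action, names in plan_zone_changes(current, desired).items():
        print(f"{action}: {', '.join(names) or '-'}")
```

The "rebuild" list is effectively your maintenance-window backlog.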

Then, obviously, we have the regional challenge. Ultimately, that challenge is this: if you need your services to keep running should a region go down, how do you prepare for that in advance? When it comes to networking in Azure, there is no replication service or tick box to make it multi-region. Why not, you ask? That’s for a different post, but let’s look at what is needed.

For networking, you would generally deploy several elements of our example design ahead of time. You could in fact deploy the whole thing, if you have the budget for Azure Firewall in both regions. The secondary network would then act as a hot secondary, allowing you to run individual workloads there permanently or as part of testing. Deploying these elements ahead of time greatly reduces your RTO, and if you have VMs, you will definitely need at least the Virtual Network as a target for Azure Site Recovery. Again, Bicep can really help here, but ultimately I would recommend having everything your budget allows deployed ahead of time.

Small items, like a Public IP that sits on an allow-list, can catch you out during BCDR. Azure only allocates the address when you deploy the Public IP (or the Public IP Prefix, if you use one; and if you’re not using prefixes, why not!?), so get them deployed and added to your vendors’ allow-lists ahead of time. Similarly, you can plan and runbook the changes required based on your existing configuration.
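One way to see why prefixes help: once a prefix is allocated, every Public IP you later create from it falls inside a range your vendors already trust. Here is a minimal sketch using Python’s standard `ipaddress` module; `203.0.113.0/28` is a documentation range (RFC 5737) standing in for your real prefix, and the `allow_list` and `is_covered` helpers are hypothetical.

```python
import ipaddress


def allow_list(prefix: str, vendor_accepts_cidr: bool = True) -> list:
    """Entries to hand to a vendor for a Public IP prefix."""
    network = ipaddress.ip_network(prefix)  # strict by default: rejects host bits
    if vendor_accepts_cidr:
        return [str(network)]  # one entry covers every address in the prefix
    # Otherwise expand to individual addresses (16 for a /28).
    return [str(address) for address in network]


def is_covered(ip: str, prefix: str) -> bool:
    """True if a deployed Public IP falls inside the allow-listed prefix."""
    return ipaddress.ip_address(ip) in ipaddress.ip_network(prefix)


if __name__ == "__main__":
    secondary_prefix = "203.0.113.0/28"  # hypothetical secondary-region prefix
    print(allow_list(secondary_prefix))
    print(len(allow_list(secondary_prefix, vendor_accepts_cidr=False)))
    print(is_covered("203.0.113.7", secondary_prefix))
```

Sharing the CIDR with vendors once, well before any outage, removes one of those small items from the failover runbook.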

Unavoidable Issues

With zone-redundant deployments, I would call out two issues, both already highlighted in brief: zone redundancy has to be configured at deployment time, and the required SKUs cost more. Configuration-wise, networking is fairly simple and shouldn’t pose challenges.

With regional redundancy, there are quite a few more. Much of this comes from the complexity of running two regions and two footprints, and from the fact that replication methods do not exist for every service: for example, you can replicate a Virtual Machine, but there is no native way to replicate Azure Firewall. There is also cost, of course; having two footprints in theory means doubling your network costs. Unfortunately, as we all know, cost is only a challenge before an outage. Once you are in one, you would happily spend an unlimited budget to recover!
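The point about native replication can be turned into a simple pre-failover check. This is a hypothetical Python sketch: it assumes you track resources by a logical role that is the same in both regions, and it treats only Virtual Machines as natively replicable (for example with Azure Site Recovery), matching the example above. Everything else has to exist in the secondary region before you need it.

```python
from typing import Dict, List, Set

# Assumption: only VMs have a native replication path in this sketch.
NATIVE_REPLICATION = {"VirtualMachine"}


def dr_gaps(primary: Dict[str, str], secondary_roles: Set[str]) -> Dict[str, List[str]]:
    """Split primary resources (role -> type) by how they reach the secondary region."""
    replicate, pre_deploy, ready = [], [], []
    for role, resource_type in primary.items():
        if resource_type in NATIVE_REPLICATION:
            # Replicated at failover, but still needs a target VNet in place.
            replicate.append(role)
        elif role in secondary_roles:
            ready.append(role)       # already deployed in the secondary region
        else:
            pre_deploy.append(role)  # no native replication: build it ahead of time
    return {"replicate": sorted(replicate),
            "pre_deploy": sorted(pre_deploy),
            "ready": sorted(ready)}


if __name__ == "__main__":
    # Hypothetical logical roles in the primary region.
    primary = {
        "hub-vnet": "VirtualNetwork",
        "hub-firewall": "AzureFirewall",
        "hub-vpn-gateway": "VirtualNetworkGateway",
        "app-vm-01": "VirtualMachine",
    }
    print(dr_gaps(primary, secondary_roles={"hub-vnet"}))
```

Anything left in "pre_deploy" is a gap you would otherwise be building during the outage itself.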

Closing Recommendation

To sum up Azure Networking BCDR: zone redundancy for a standard footprint is very achievable and is definitely the way to go. If you need regional redundancy, try to build ahead of time everything you can to mirror the primary region.