A common starting point for most people when first using Azure, or any public cloud, is a virtual machine. Depending on your environment, VMs can be one of your most expensive resources, so it’s no surprise that their cost can be a strong negative when considering a move to the cloud.

Before anything is deployed, it’s important that you are aware of the tools that Microsoft make available to help you estimate your costs in advance. They can help you both understand your bill and avoid unwanted surprises on it.

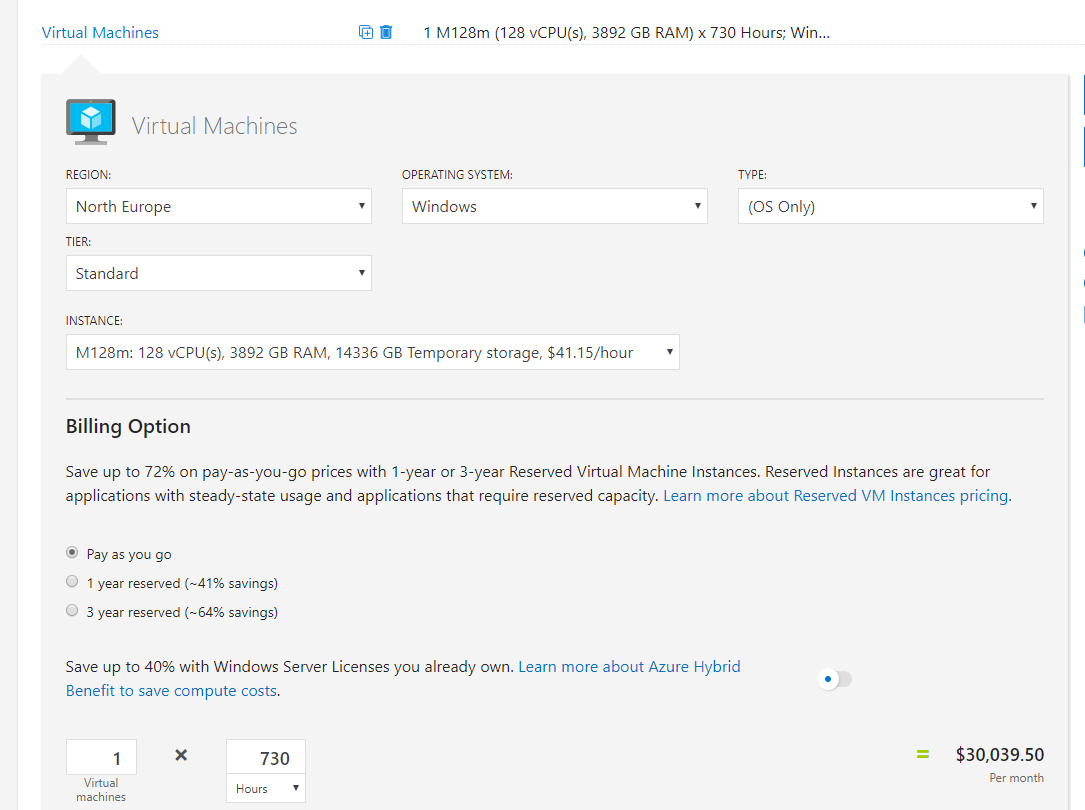

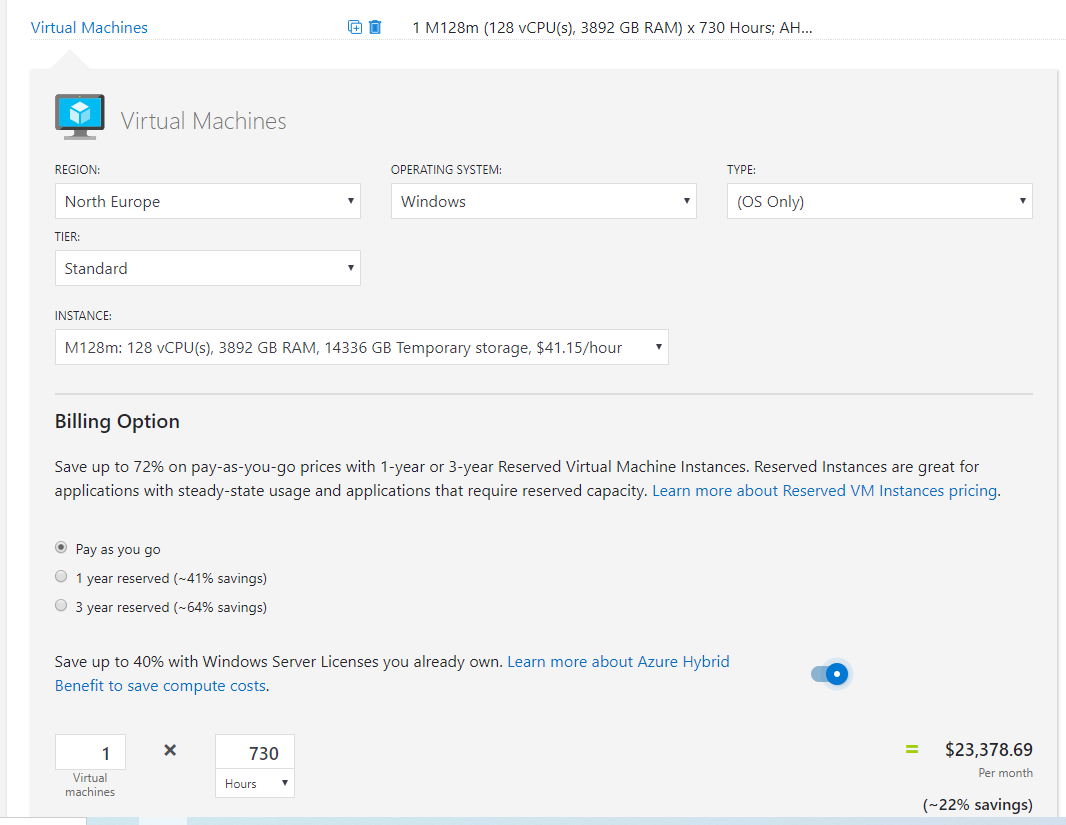

First up is the Azure Pricing Calculator. With a bit of work, you can achieve an acceptably accurate cost estimate for an environment. I normally choose the default settings for pricing options (such as PAYG), as this gives me the most expensive, and therefore safest, estimate for a quick quote. If you have access to other consumption offers, make sure you are signed in so you can access their rates.

For this post I’m going to use a single-VM estimate to show the cost and how it changes. As it’s a single VM, I have chosen a beast: the M128m.

Once you have your worst-case estimate, it’s time to start making some adjustments to get that price down as low as possible. To do this, I recommend the following three options.

- Reserved Instances

- Automation

- Hybrid Benefit

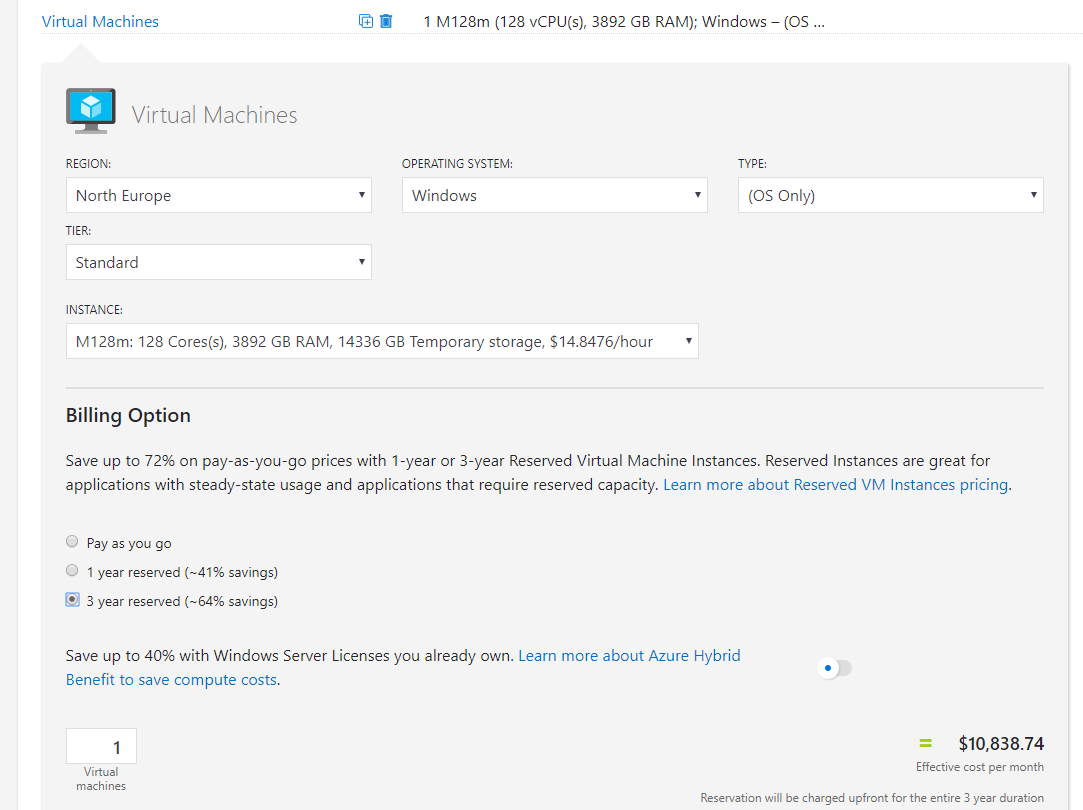

First up, and most straightforward: Reserved Instances. They are a billing object that allows you to save money over a fixed period of time by paying for the usage up front. From the screen grab you can see the savings can be approx. 64% for a three-year reserved instance. I have an old post on RIs over here that is still valid.

Again, you will pay the entire price up front, but look at the difference it makes to the monthly rate for our beast:

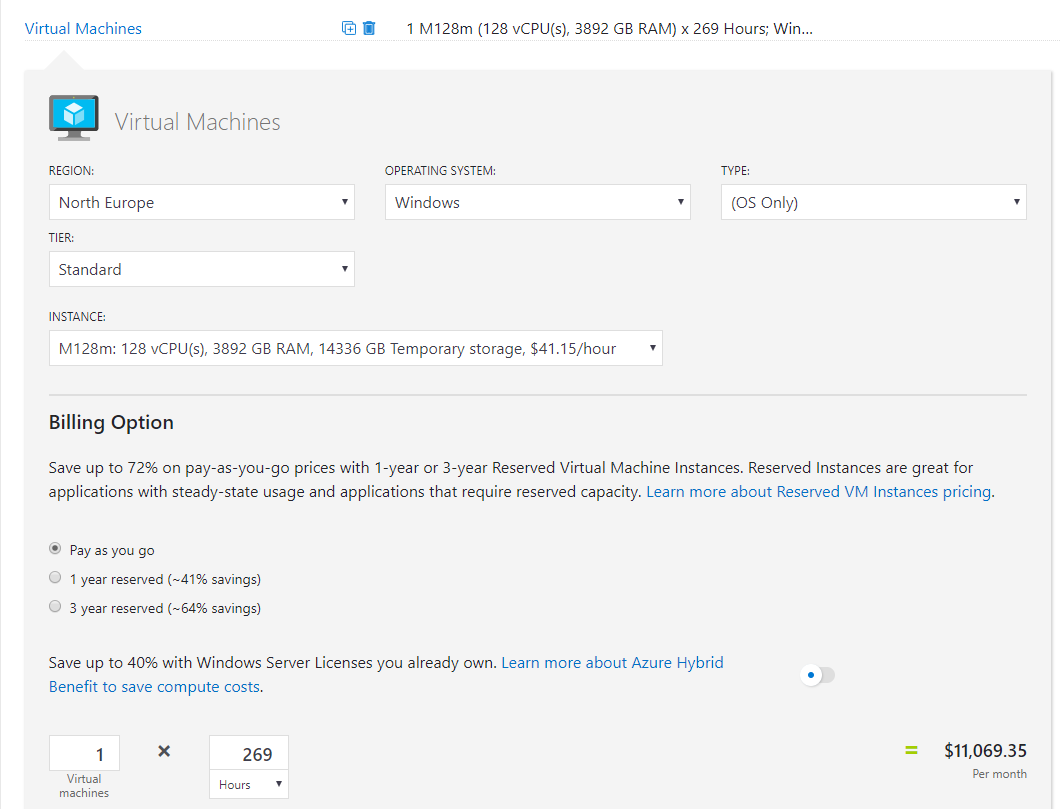

Next, modifying your usage hours using Automation. This doesn’t have to mean Azure Automation and its Start/Stop solution, as there are alternatives, such as the one over on Azure MVP Gregor Suttie’s blog. Whatever method you choose, update your usage hours in the cost calculator to see your savings. For this post I’m going to first remove weekends (an average of 8 days a month = 192 hours, leaving 730 - 192 = 538) and then cut the remaining workday hours in half (538/2). So instead of 730 hours, we get 269 hours and the appropriate reduction in price to our beast:
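If you want to play with the schedule before typing numbers into the calculator, the arithmetic above can be sketched in a few lines of Python. This is only a rough illustration: the function name `scheduled_hours` and its parameters are my own, not anything from Azure, and it assumes the same 730-hour month used above.

```python
HOURS_PER_MONTH = 730  # month length used in the estimate above


def scheduled_hours(weekend_days: int = 8, workday_fraction: float = 0.5) -> float:
    """Hours a VM runs per month once weekends are removed and the
    remaining hours are scaled by workday_fraction (hypothetical helper)."""
    weekend_hours = weekend_days * 24                   # 8 days -> 192 hours
    remaining_hours = HOURS_PER_MONTH - weekend_hours   # 730 - 192 = 538
    return remaining_hours * workday_fraction           # 538 / 2 = 269


hours = scheduled_hours()
saving = 1 - hours / HOURS_PER_MONTH

print(hours)             # 269.0
print(f"{saving:.0%}")   # 63%
```

Change `weekend_days` or `workday_fraction` to match your own schedule, then use the resulting hours figure in the calculator.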

One thing to note at this point: if you’re using Reserved Instances, there is no point in using Automation to save on costs. RIs cover the full usage for the period, so you pay for the reservation whether the VM is running or not.
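To make that trade-off a bit more concrete, here is a minimal sketch comparing the two approaches. It assumes the approx. 64% three-year saving mentioned earlier applies to the whole hourly rate, ignores licensing, and uses a made-up hourly rate of 10.0, so treat the function names and figures as hypothetical and check the calculator for your own VM.

```python
HOURS_PER_MONTH = 730
RI_DISCOUNT = 0.64  # approx. three-year RI saving quoted above (assumption)


def payg_monthly(hourly_rate: float, hours_running: float) -> float:
    """PAYG cost: you only pay for the hours the VM is running."""
    return hourly_rate * hours_running


def reserved_monthly(hourly_rate: float) -> float:
    """RI cost per month: fixed, regardless of how many hours the VM runs."""
    return hourly_rate * HOURS_PER_MONTH * (1 - RI_DISCOUNT)


def break_even_hours() -> float:
    """Monthly running hours at which PAYG and the RI cost the same."""
    return HOURS_PER_MONTH * (1 - RI_DISCOUNT)


rate = 10.0  # hypothetical hourly rate, for illustration only
for hours in (730, 269, 100):
    print(hours, payg_monthly(rate, hours), round(reserved_monthly(rate), 2))

print(round(break_even_hours(), 1))
```

Under these assumptions the break-even point is roughly 263 hours a month. The 269-hour schedule from earlier lands very close to the three-year RI price, while a VM that runs far fewer hours may be cheaper on PAYG with Automation.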

Finally, the simplest to implement but arguably most complex option: Azure Hybrid Benefit. This is a licensing option that allows you to reuse your on-prem licenses in Azure. It can only be used in Azure, which makes it a unique cost-saving method. Applying it is simply a tickbox within your VM blade. Microsoft have a calculator to help you work out the licensing side of things, but I’d recommend leaning on your LSP for this part, as it can be a bit complicated and you need to make sure you’re compliant. You can see the savings below for our beast:

You’re probably already thinking it: can I layer these together and save even more? Absolutely.

Check out the reduction to the price of the beast if we apply AHB and a three-year RI:

So what are you waiting for? Head over to your Azure tenant and start saving some money on those VMs ASAP. As always, if there are any questions, get in touch!