Back in 2017, Microsoft announced the introduction of B-Series compute. Since then, the offering hasn’t changed a huge amount, yet it remains one of the most consistently misunderstood VM SKUs available.

Part of the problem is how they are displayed in the portal. Classed alongside the D-Series as “General purpose” but with a much more attractive price point, the B-Series appears to be a winner for all your workloads.

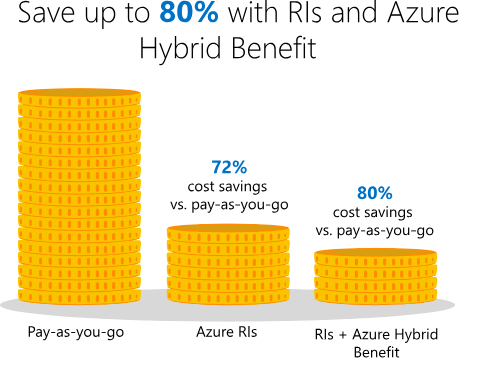

Comparing a B2ms and a D2s_V3, there is a clear saving per month regardless of your consumption offer. You can see they have the same amount of vCPU and RAM, which is the most common deciding factor when sizing a VM. However, the B-Series has some unique features.

The B-series VMs are designed to offer “burstable” performance. They leverage flexible CPU usage, suitable for workloads that will run for a long time using as small a fraction of the CPU performance as possible and then spike to needing the full performance of the CPU due to incoming traffic or required work.

There are currently 10 different SKUs available, although not in all regions yet. I’ve listed the current specs below:

| Size | vCPU | Memory (GiB) | Temp storage (SSD, GiB) | Base CPU Perf of VM | Max CPU Perf of VM | Initial Credits | Credits banked / hour | Max Banked Credits |
|---|---|---|---|---|---|---|---|---|
| Standard_B1ls | 1 | 0.5 | 4 | 5% | 100% | 30 | 3 | 72 |
| Standard_B1s | 1 | 1 | 4 | 10% | 100% | 30 | 6 | 144 |
| Standard_B1ms | 1 | 2 | 4 | 20% | 100% | 30 | 12 | 288 |
| Standard_B2s | 2 | 4 | 8 | 40% | 200% | 60 | 24 | 576 |
| Standard_B2ms | 2 | 8 | 16 | 60% | 200% | 60 | 36 | 864 |
| Standard_B4ms | 4 | 16 | 32 | 90% | 400% | 120 | 54 | 1296 |
| Standard_B8ms | 8 | 32 | 64 | 135% | 800% | 240 | 81 | 1944 |
| Standard_B12ms | 12 | 48 | 96 | 202% | 1200% | 360 | 121 | 2909 |
| Standard_B16ms | 16 | 64 | 128 | 270% | 1600% | 480 | 162 | 3888 |
| Standard_B20ms | 20 | 80 | 160 | 337% | 2000% | 600 | 203 | 4860 |
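The credit columns in the table appear to follow from the vCPU count and the base CPU percentage. The sketch below is my own reading of the figures, not an official formula: it assumes one credit equals one vCPU at 100% for one minute, that initial credits are 30 per vCPU, and that the bank is capped at roughly 24 hours of earnings. The function names are made up for illustration, and the larger sizes in the table differ slightly because the published percentages are rounded.

```python
# Figures copied from the table above: size -> (vCPU, base CPU %)
SIZES = {
    "Standard_B1ms": (1, 20),
    "Standard_B2ms": (2, 60),
    "Standard_B4ms": (4, 90),
}

def credits_banked_per_hour(base_cpu_pct: float) -> float:
    """Assumption: 1 credit = 1 vCPU at 100% for 1 minute, so an idle VM earns base% x 60 per hour."""
    return base_cpu_pct * 60 / 100

def initial_credits(vcpus: int) -> int:
    """Assumption: 30 starting credits per vCPU, matching the Initial Credits column."""
    return 30 * vcpus

def max_banked_credits(base_cpu_pct: float) -> float:
    """Assumption: the cap is about 24 hours of earnings at the base rate."""
    return credits_banked_per_hour(base_cpu_pct) * 24

for size, (vcpus, base_pct) in SIZES.items():
    print(size, initial_credits(vcpus), credits_banked_per_hour(base_pct), max_banked_credits(base_pct))
```

For the three sizes listed, the derived values line up with the Initial Credits, Credits banked / hour, and Max Banked Credits columns.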

So the ability to burst sounds great for certain workloads. However, it obviously isn’t unlimited. While a B-Series VM is running in its low points and not fully utilizing the baseline performance of the CPU, the VM instance builds up credits. When the VM has accumulated enough credit, it can burst above the baseline, up to 100% of the vCPU, for the period of time when your application requires the higher CPU performance.

Here is a great example from Microsoft Docs of how credits are accumulated and spent.

“I deploy a VM using the B1ms size for my application. This size allows my application to use up to 20% of a vCPU as my baseline, which is .2 credits per minute I can use or bank.

My application is busy at the beginning and end of my employees work day, between 7:00-9:00 AM and 4:00 – 6:00PM. During the other 20 hours of the day, my application is typically at idle, only using 10% of the vCPU. For the non-peak hours I earn 0.2 credits per minute but only consume 0.1 credits per minute, so my VM will bank .1 x 60 = 6 credits per hour. For the 20 hours that I am off-peak, I will bank 120 credits.

During peak hours my application averages 60% vCPU utilization, I still earn 0.2 credits per minute but I consume 0.6 credits per minute, for a net cost of .4 credits a minute or .4 x 60 = 24 credits per hour. I have 4 hours per day of peak usage, so it costs 4 x 24 = 96 credits for my peak usage.

If I take the 120 credits I earned off-peak and subtract the 96 credits I used for my peak times, I bank an additional 24 credits per day that I can use for other bursts of activity.”
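The arithmetic in the example above can be checked with a few lines of Python. This is only a sketch of the quoted scenario: the helper name `net_credits_per_hour` is hypothetical, and it assumes usage is constant within each period.

```python
def net_credits_per_hour(baseline_pct: float, usage_pct: float) -> float:
    """Credits gained (positive) or spent (negative) per hour at a steady CPU usage."""
    return (baseline_pct - usage_pct) * 60 / 100

BASELINE_PCT = 20  # B1ms baseline from the example

# 20 off-peak hours at 10% CPU, 4 peak hours at 60% CPU
off_peak_banked = net_credits_per_hour(BASELINE_PCT, 10) * 20
peak_spent = net_credits_per_hour(BASELINE_PCT, 60) * 4
daily_net = off_peak_banked + peak_spent

print(off_peak_banked)  # 120.0 credits banked off-peak
print(peak_spent)       # -96.0 credits spent at peak
print(daily_net)        # 24.0 credits left over per day
```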

That was quite a bit of maths, so what are the important points?

- Baseline vCPU performance – This dictates your earn/spend threshold, so if current vCPU usage is under the baseline you’re increasing your credits. If it’s over, you’re decreasing them. If it’s the same, you will earn and spend credits at an equal rate with no change to your credit balance.

- Peak utilisation consumption – If this is not allowing you to bank credits, you will eventually end up in a situation where you cannot burst so you might need to size up your VM.

- Automation – Automating the deallocation of your VM (for example, on a schedule) doesn’t help here, because you only earn credits while the VM is allocated. Re-allocating your VM will cause you to lose your banked credits and start again from the starting allocation.

- Starter Credit – You are allocated a starting credit of 30 x the number of cores, as shown in the Initial Credits column of the table.
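To get a feel for how these points interact, here is a rough hour-by-hour simulation of a credit balance. It is a simplification under stated assumptions rather than how Azure actually meters credits: usage is treated as constant within each hour, the balance is simply clamped between zero and the maximum, and the `simulate` and `hours_until_exhausted` helpers are hypothetical names of my own.

```python
from typing import List, Optional

def simulate(hourly_usage_pct: List[float], baseline_pct: float,
             start_credits: float, max_credits: float) -> List[float]:
    """Return the credit balance at the end of each hour."""
    balance = start_credits
    history = []
    for usage in hourly_usage_pct:
        balance += (baseline_pct - usage) * 60 / 100  # earn below baseline, spend above it
        balance = max(0.0, min(balance, max_credits))  # assumption: clamp to [0, max banked]
        history.append(balance)
    return history

def hours_until_exhausted(balance: float, baseline_pct: float,
                          usage_pct: float) -> Optional[float]:
    """Hours of sustained usage before the balance hits zero, or None if it never drains."""
    burn_per_hour = (usage_pct - baseline_pct) * 60 / 100
    if burn_per_hour <= 0:
        return None
    return balance / burn_per_hour

# B1ms from the table: baseline 20%, 30 starter credits, 288 max banked.
# Usage profile from the Microsoft example: 60% at 7-9 AM and 4-6 PM, otherwise 10%.
day = [60 if (7 <= h < 9 or 16 <= h < 18) else 10 for h in range(24)]
history = simulate(day, baseline_pct=20, start_credits=30, max_credits=288)

print(min(history))   # lowest balance during the first day
print(history[-1])    # balance at the end of the first day
print(hours_until_exhausted(30, 20, 60))  # a fresh VM held at 60% CPU
```

Under these assumptions a freshly allocated B1ms held at 60% CPU would run out of starter credit in a little over an hour, which is why the baseline and the starter credit matter so much when sizing.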

You can monitor your credit spend and usage via Azure Monitor using the dedicated credit metrics (CPU Credits Remaining and CPU Credits Consumed). This allows you to fire metric alerts relative to your VM, which is very handy if you want to make sure you’re not consistently pushing the performance by mistake and therefore burning credits accidentally.

B-series compute, once understood correctly, is a great option to maximise cost efficiency in your environment. Once you’ve mastered the different approach required, you can make significant savings with relatively little effort.

There is a Q&A on some common topics here.