Depending on your level of permission on an Azure subscription, you may or may not have encountered Resource Providers directly. However, when you do, they can be a bit tricky. This post will hopefully clear up some of the most common issues and help you get working that bit quicker.

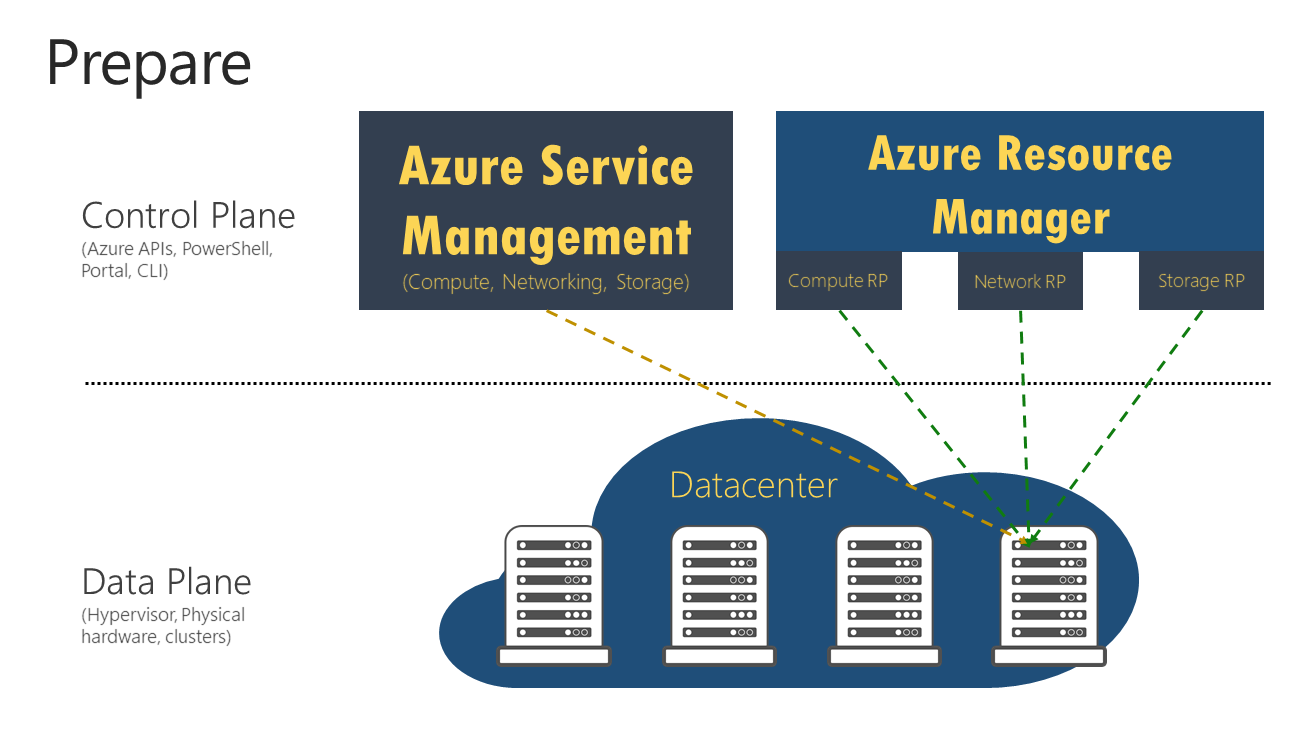

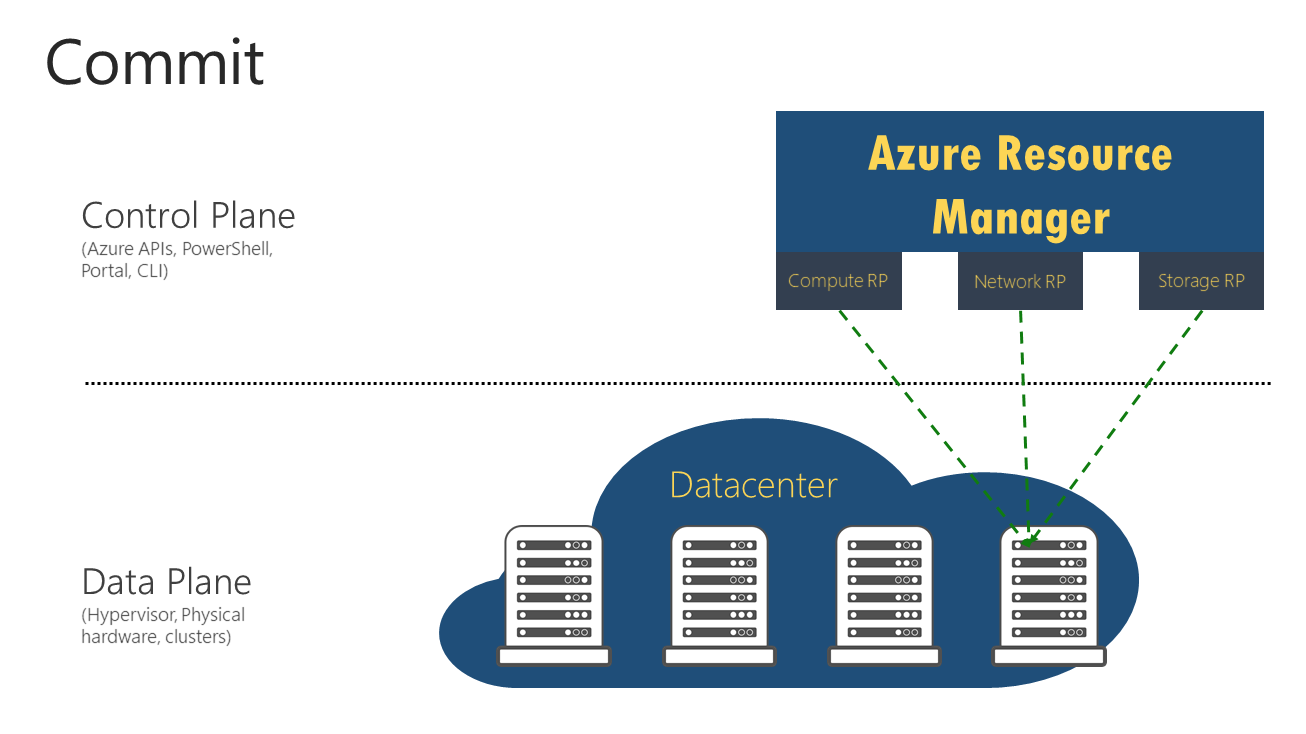

First up, what is an Azure Resource Provider? Simply put, it is a service within Azure Resource Manager that provides the resources you build. An example is Microsoft.Network which provides Virtual Networks among many others.

Some Resource Providers are registered by default, and others are registered automatically when you deploy a resource that needs them, provided you have the correct role at subscription level. To register a provider you need either Contributor, Owner, or a Custom Role with permission to do the /register/action operation. Registration always happens at subscription level and, once a provider is registered, you can’t unregister it while you still have resource types from that Resource Provider in your subscription.

So, in a scenario where you have an Owner role but only on a Resource Group within a subscription, you do not have permission to register Resource Providers.
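If you are building a Custom Role, it can help to see exactly what the register operation looks like for a given provider. The following is a minimal sketch, assuming the Az PowerShell module is installed and you are already signed in; `Microsoft.Network` is just the example namespace used earlier in this post.

```powershell
# Look up the register operation for one provider namespace.
# A Custom Role would need this operation in its allowed Actions,
# assigned at subscription scope, to be able to register the provider.
Get-AzProviderOperation -OperationSearchString 'Microsoft.Network/register/action' |
    Select-Object Operation, OperationName, Description
```

Swapping the namespace lets you check the equivalent operation for any other provider.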

Next, how do I check which Resource Providers are registered? There are a couple of ways to achieve this. You can simply check within the Portal, which gives some nice immediate visuals. Head to the Azure Portal, and navigate to your subscription. Scroll down to the Settings section and choose Resource Providers.

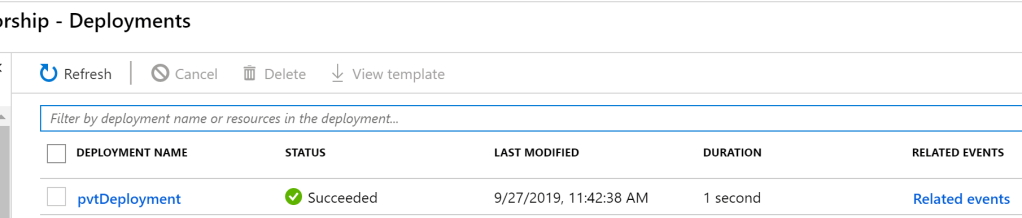

From here you can see a list of providers and their status: Registered, NotRegistered, or Registering. To register, simply click the relevant provider and choose Register at the top of the list. Unregistering works the same way, as long as no resources from that provider remain in the subscription.

In some cases, you may want to avoid issues with NotRegistered providers by registering them all for a subscription. This can be achieved via the shell.
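Working from the shell means signing in and picking a subscription first. As a rough sketch, assuming the Az PowerShell module is installed, that could look like the following. The subscription value is a placeholder, not a real name.

```powershell
# Sign in interactively.
Connect-AzAccount

# List the subscriptions your account can see.
Get-AzSubscription | Select-Object Name, Id

# Point the session at the subscription you want to work on.
# '<subscription name or ID>' is a placeholder; replace it with your own value.
Set-AzContext -Subscription '<subscription name or ID>'
```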

Log into Azure PowerShell and choose your required subscription. Next, run the following:

    Get-AzResourceProvider -ListAvailable | Select-Object ProviderNamespace, RegistrationState

This will list all resource providers, and the registration status for your subscription. You can get additional details on each provider including resources it supports and locations supported by running the commands detailed in this doc.
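As an illustration of those additional details, here is a short sketch that lists the resource types for one provider and the locations for one of its types. `Microsoft.Network` and `virtualNetworks` are only the example from earlier in this post, and the snippet assumes you are already signed in.

```powershell
# List the resource type names a provider offers.
(Get-AzResourceProvider -ProviderNamespace 'Microsoft.Network').ResourceTypes.ResourceTypeName

# List the locations in which one of those resource types is available.
((Get-AzResourceProvider -ProviderNamespace 'Microsoft.Network').ResourceTypes |
    Where-Object ResourceTypeName -eq 'virtualNetworks').Locations
```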

To register all providers at once, run the following:

    Get-AzResourceProvider -ListAvailable | Register-AzResourceProvider

The shell will then cycle through all providers and list the status of each as it works its way through them.
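If registering everything is more than you need, a narrower variant is possible. The sketch below is one way to do it, not the only one: it registers only the providers currently reporting NotRegistered, or a single named provider. It assumes you are signed in with a role that allows the register action at subscription level.

```powershell
# Register only the providers that are not yet registered,
# skipping anything already Registered or Registering.
Get-AzResourceProvider -ListAvailable |
    Where-Object RegistrationState -eq 'NotRegistered' |
    Register-AzResourceProvider

# Or register one provider by name (example namespace from this post).
Register-AzResourceProvider -ProviderNamespace 'Microsoft.Network'
```

Registration is asynchronous, so a provider may show as Registering for a while before it reports Registered.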

And that’s it! You now know how to check the status of your Resource Providers and how to enable them as needed. As usual, I can’t take any responsibility for commands provided in examples, so please use them at your own risk. If there are any questions, please get in touch!